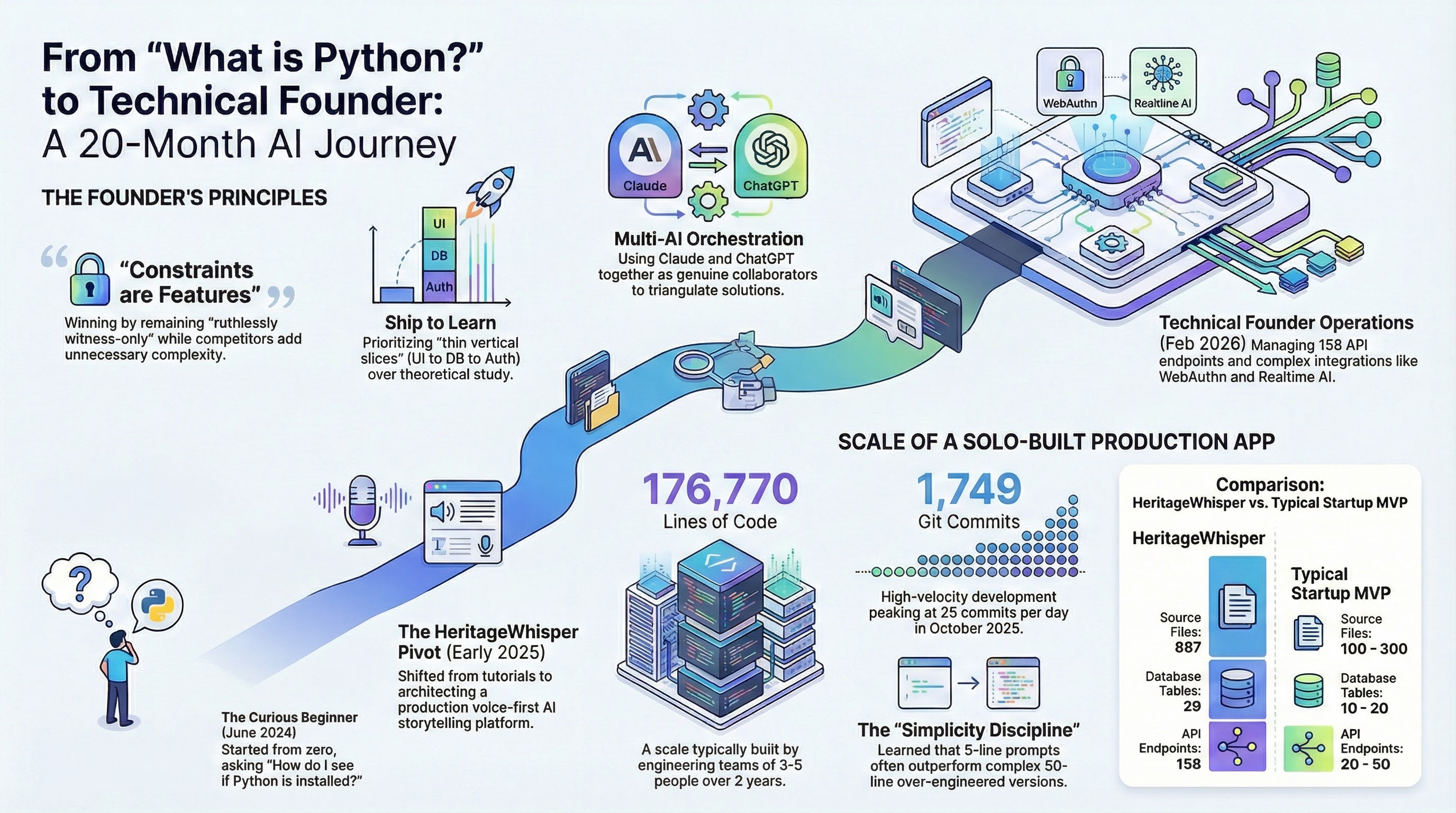

Two years deep inside AI tools, automation, local models, and AI-assisted development. Not from the sidelines, but by building, breaking, shipping, and comparing what works in real workflows.

I wanted both. I already knew sales, operations, enablement, incentives, customer experience, and executive strategy. The missing piece was understanding what AI could actually do when you push it hard.

This page holds the receipts from that learning curve.

Understanding feasibility and breaking the "magic" barrier.

Rapid experimentation. Learning by shipping. Breaking things.

Production-grade architecture, security, and strategic constraint.

Scale, SEO, advanced automation, and "AI Leader" operations.

No bootcamp, no CS degree, no playbook. 17 months of testing every frontier tool, burning through tokens, and building a local cluster to run it all.

Where 17 months of frontier testing actually went.

Most "AI users" live in one app. Real fluency means knowing when to reach for which tool, and which to leave behind.

A 50/50 split between active and dropped tools isn't churn. It's how you learn what actually earns its place in a workflow.

Volume isn't taste. The dropped column is where the taste lives.

$15K+ in hardware. Networked, orchestrated, running 24/7. Most AI users rent. This stack runs at home.

The long prompt is way worse than the short prompt.

After testing Pearl AI: complexity was hurting performance.

Pearl cannot have any opinions. Is that clear in here?

Defining the AI as a "witness," not a therapist.

Let's stop the incremental patches. The system is fundamentally broken.

Recognizing architectural debt. The shift from "Help me make X" to systematic engineering.

If it runs 24/7, treat it like production infrastructure.

Feb 2026, while hardening OpenClaw automation.

Heritage Whisper is my current focus: a voice-first platform helping families preserve stories across generations.

If you have questions about this journey, want to share your own experience, or just want to connect, I'd love to hear from you.